Interpreting the Oracle Listener Log (listener.log)


Listener log overview

In an Oracle database, the listener log file (listener.log) keeps growing unless it is truncated from time to time.

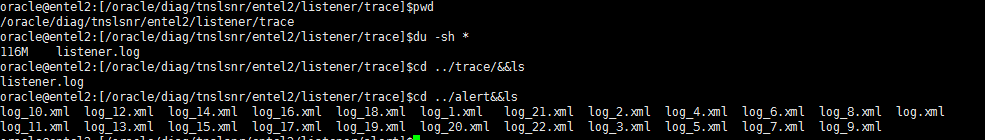

Listener log location

For Oracle 9i/10g

The log is at the following path:

$ORACLE_HOME/network/log/listener_$ORACLE_SID.log

For Oracle 11g/12c

The log is at the following path:

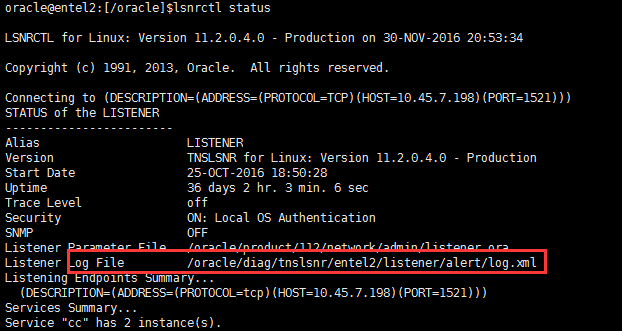

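$ORACLE_BASE/diag/tnslsnr/<hostname>/listener/trace/listener.log

The location can also be checked with lsnrctl status.

The screenshot above shows the XML-format log (log.xml under the alert directory); its content is the same as the plain-text listener.log.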
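As a rough sketch of the two ways to locate the log mentioned above, the helpers below build the 11g/12c path from its parts and pull the log location out of `lsnrctl status` output. The function names are made up for illustration. The `<listener_name>.log` file name and the `Listener Log File` label are assumptions based on the paths and commands used elsewhere in this article.

```bash
#!/bin/bash
# Hypothetical helpers; the names are illustrative, not part of any Oracle tool.

# Build the 11g/12c text log path from ORACLE_BASE, host name and listener name.
# Assumption: the file in the trace directory is named <listener_name>.log.
adr_listener_log() {
    local base=$1 host=$2 lsnr=${3:-listener}
    printf '%s/diag/tnslsnr/%s/%s/trace/%s.log\n' "$base" "$host" "$lsnr" "$lsnr"
}

# Read `lsnrctl status` output on stdin and print the reported log file.
# Assumption: the line is labelled "Listener Log File", with the path in field 4.
listener_log_from_status() {
    awk '/Listener Log File/ {print $4}'
}
```

Typical use would be `adr_listener_log "$ORACLE_BASE" "$(hostname -s)"` or `lsnrctl status | listener_log_from_status`. On 11g and later the status output usually reports the XML log rather than the text file.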

On 11g, the log can also be viewed with the adrci command:

oracle@entel2:[/oracle]$adrci

ADRCI: Release 11.2.0.4.0 - Production on Wed Nov 30 20:56:28 2016

Copyright (c) 1982, 2011, Oracle and/or its affiliates. All rights reserved.

ADR base = "/oracle"

adrci> help        -- lists the help topics; "help show alert" shows the detailed usage of show alert

HELP [topic]

Available Topics:

CREATE REPORT

ECHO

EXIT

HELP

HOST

IPS

PURGE

RUN

SET BASE

SET BROWSER

SET CONTROL

SET ECHO

SET EDITOR

SET HOMES | HOME | HOMEPATH

SET TERMOUT

SHOW ALERT

SHOW BASE

SHOW CONTROL

SHOW HM_RUN

SHOW HOMES | HOME | HOMEPATH

SHOW INCDIR

SHOW INCIDENT

SHOW PROBLEM

SHOW REPORT

SHOW TRACEFILE

SPOOL

There are other commands intended to be used directly by Oracle, type

"HELP EXTENDED" to see the list

adrci> show alert        -- display the alert information

Choose the alert log from the following homes to view:

1: diag/clients/user_oracle/host_880756540_80

2: diag/tnslsnr/procsdb2/listener_cc

3: diag/tnslsnr/entel2/sid_list_listener

4: diag/tnslsnr/entel2/listener_rb

5: diag/tnslsnr/entel2/listener

6: diag/tnslsnr/entel2/listener_cc

7: diag/tnslsnr/procsdb1/listener_rb

8: diag/rdbms/ccdg/ccdg

9: diag/rdbms/rb/rb

10: diag/rdbms/cc/cc

Q: to quit

Please select option: 5        -- enter a number to view the corresponding log

Output the results to file: /tmp/alert_13187_1397_listener_3.ado

2016-06-27 09:15:45.164000 -04:00

Create Relation ADR_CONTROL

Create Relation ADR_INVALIDATION

Create Relation INC_METER_IMPT_DEF

2016-06-27 09:15:46.444000 -04:00

Create Relation INC_METER_PK_IMPTS

System parameter file is /oracle/product/112/network/admin/listener.ora

Log messages written to /oracle/diag/tnslsnr/entel2/listener/alert/log.xml

Trace information written to /oracle/diag/tnslsnr/entel2/listener/trace/ora_16175_140656975550208.trc

Trace level is currently 0

Started with pid=16175

Listening on: (DESCRIPTION=(ADDRESS=(PROTOCOL=tcp)(HOST=10.45.7.198)(PORT=1521)))

Listening on: (DESCRIPTION=(ADDRESS=(PROTOCOL=tcp)(HOST=192.168.123.1)(PORT=1521)))

Listener completed notification to CRS on start

......

......

......
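The interactive menu above is awkward to use from scripts. A minimal sketch of a non-interactive variant follows, assuming the `exec=` option of adrci and the `-tail` option of `show alert` behave as on 11.2. The function names are hypothetical, and the home path is the one listed as option 5 above.

```bash
#!/bin/bash
# Hypothetical wrapper: print the last N lines of one ADR home's alert log
# without going through the interactive menu.

# Build the adrci command string for a given ADR home and line count.
adrci_tail_script() {
    local home=$1 lines=${2:-50}
    printf 'set home %s; show alert -tail %s' "$home" "$lines"
}

# Run it; requires adrci on PATH and a configured ADR base.
tail_listener_alert() {
    adrci exec="$(adrci_tail_script "$1" "$2")"
}
```

For example, `tail_listener_alert diag/tnslsnr/entel2/listener 20` would print the last 20 lines of the listener log chosen in the session above.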

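Cleaning up the listener log file

The listener log file (listener.log) needs to be cleaned up regularly, for these reasons:

1: listener.log keeps growing and takes up extra storage space.

2: An oversized listener.log causes problems of its own, and finding anything in it becomes tedious.

3: An oversized listener.log slows down both writing to the file and viewing it.

Cleaning up listener.log on a schedule is also known as truncating the log file.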
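One way to decide when a cleanup is due is a simple size check. The sketch below is only an illustration: the function name and the idea of a megabyte threshold are assumptions, not something the listener provides.

```bash
#!/bin/bash
# Hypothetical check: succeed (exit status 0) when the file is larger than
# the given number of megabytes, fail otherwise; status 2 if it is missing.
log_exceeds_mb() {
    local file=$1 limit_mb=$2 bytes
    [ -f "$file" ] || return 2
    # Arithmetic expansion strips any padding that wc may print.
    bytes=$(( $(wc -c < "$file") ))
    [ "$bytes" -gt $((limit_mb * 1024 * 1024)) ]
}
```

A cron job could call `log_exceeds_mb "$LOG" 500 && echo "listener.log needs rotating"`, where `$LOG` and the 500 MB limit are placeholders.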

An example of the wrong approach:

oracle@entel2:[/oracle]$mv listener.log listener.log.20161201

oracle@entel2:[/oracle]$cp /dev/null listener.log

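oracle@entel2:[/oracle]$more listener.log

As shown above, after the log is truncated this way the listener process (tnslsnr) does not write new entries to listener.log. It still holds the original file open, and renaming a file does not change which file an open handle points to, so new entries keep going to listener.log.20161201.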
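The behaviour is easy to reproduce without Oracle. The sketch below assumes the listener keeps its log open for writing, which matches what is observed above. A shell file descriptor stands in for tnslsnr, and the function name is made up for the demonstration.

```bash
#!/bin/bash
# Self-contained demonstration; no Oracle software is involved.
demo_rename_keeps_fd() {
    local dir
    dir=$(mktemp -d) || return 1
    exec 3>>"$dir/listener.log"          # stands in for tnslsnr's open log
    echo "entry before rename" >&3
    mv "$dir/listener.log" "$dir/listener.log.20161201"
    cp /dev/null "$dir/listener.log"     # new, empty file under the old name
    echo "entry after rename" >&3        # still lands in the renamed file
    exec 3>&-
    # Print: <lines in renamed file> <bytes in new listener.log>
    echo "$(( $(wc -l < "$dir/listener.log.20161201") )) $(( $(wc -c < "$dir/listener.log") ))"
    rm -rf "$dir"
}
```

Calling `demo_rename_keeps_fd` prints `2 0`: both entries are in the renamed file and the new listener.log stays empty.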

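The correct approach

1: First stop the listener process (tnslsnr) from writing to the log.

oracle@entel2:[/oracle]$lsnrctl set log_status off

2: Copy the listener log file (listener.log), naming the copy listener.log.yyyymmdd.

oracle@entel2:[/oracle]$cp listener.log listener.log.20161201

3: Empty the listener log file (listener.log). There are several ways to empty a file:

oracle@entel2:[/oracle]$echo "" > listener.log

(this leaves a single newline in the file) or

oracle@entel2:[/oracle]$cp /dev/null listener.log

or

oracle@entel2:[/oracle]$cat /dev/null > listener.log

or

oracle@entel2:[/oracle]$>listener.log

4: Re-enable logging for the listener process (tnslsnr).

oracle@entel2:[/oracle]$lsnrctl set log_status on

Alternatively, move the listener log file (listener.log) away while logging is off; the listener creates a new listener.log automatically once logging is back on.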

oracle@entel2:[/oracle]$ lsnrctl set log_status off

oracle@entel2:[/oracle]$mv listener.log listener.yyyymmdd

oracle@entel2:[/oracle]$lsnrctl set log_status on
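The four steps of the copy-and-empty procedure can be wrapped in one function. This is a sketch under assumptions: `rotate_listener_log` and the `LSNRCTL` override are illustrative names, and the default (unnamed) listener is assumed, as in the commands above.

```bash
#!/bin/bash
# Sketch only: LSNRCTL and rotate_listener_log are illustrative names.
# LSNRCTL can be overridden, for example to point at a full path.
LSNRCTL=${LSNRCTL:-lsnrctl}

rotate_listener_log() {
    local log=$1 stamp rc
    stamp=$(date +%Y%m%d)
    [ -f "$log" ] || { echo "No such log: $log" >&2; return 1; }
    [ -e "$log.$stamp" ] && { echo "Already rotated today: $log.$stamp" >&2; return 1; }

    "$LSNRCTL" set log_status off || return 1   # step 1: stop logging
    cp -p "$log" "$log.$stamp" && : > "$log"    # steps 2 and 3: copy, then empty
    rc=$?
    "$LSNRCTL" set log_status on                # step 4: always re-enable logging
    return $rc
}
```

Logging is switched back on even if the copy fails, so a full disk does not leave the listener silent. `: > file` empties the file in place, which keeps the name and inode the listener expects.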

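Cleanup shell scripts

These steps are best handled by a shell script scheduled with crontab, so that the listener log is cleaned up and truncated regularly.

A simple version (core part only):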

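# Day of month, used as the suffix of the backup copy.

rq=`date +"%d"`

# Stop logging first so that no entries are lost between the copy and the truncate.

su - oracle -c "lsnrctl set log_status off"

cp $ORACLE_HOME/network/log/listener.log $ORACLE_BACKUP/network/log/listener_$rq.log

cp /dev/null $ORACLE_HOME/network/log/listener.log

su - oracle -c "lsnrctl set log_status on"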

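This script still leaves one problem unsolved: how long to keep the truncated listener logs. For example, I may want to keep them for only one month and have the job take care of that automatically, with no manual work. A script called cls_oracle.sh does exactly this. It also archives and cleans other log files, such as the alert log (alert_sid.log), which makes it quite powerful.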
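Independently of cls_oracle.sh, the retention rule on its own can be sketched in a few lines. The function name is hypothetical, and the dated-copy naming (`listener.log.YYYYMMDD`) is the convention used in the manual steps earlier.

```bash
#!/bin/bash
# Sketch only: purge_old_cuts is an illustrative name.
# Deletes dated copies (<log>.YYYYMMDD) whose modification time is more
# than <days> days in the past; the live log itself is never matched.
purge_old_cuts() {
    local log=$1 days=$2
    case "$days" in
        ''|*[!0-9]*) echo "Invalid days: $days" >&2; return 1 ;;
    esac
    find "$(dirname "$log")" -maxdepth 1 -type f \
        -name "$(basename "$log").[0-9][0-9][0-9][0-9][0-9][0-9][0-9][0-9]" \
        -mtime +"$days" -exec rm -f {} \;
}
```

`purge_old_cuts /path/to/listener.log 31` would keep roughly the last month of dated copies. The age test uses the file's modification time, not the date in its name.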

#!/bin/bash

#

# Script used to cleanup any Oracle environment.

#

# Cleans: audit_file_dest

# background_dump_dest

# core_dump_dest

# user_dump_dest

#

# Rotates: Alert Logs

# Listener Logs

#

# Scheduling: 00 00 * * * /home/oracle/_cron/cls_oracle/cls_oracle.sh -d 31 > /home/oracle/_cron/cls_oracle/cls_oracle.log 2>&1

#

# Created By: Tommy Wang 2012-09-10

#

# History:

#

RM="rm -f"

RMDIR="rm -rf"

LS="ls -l"

MV="mv"

TOUCH="touch"

TESTTOUCH="echo touch"

TESTMV="echo mv"

TESTRM=$LS

TESTRMDIR=$LS

SUCCESS=0

FAILURE=1

TEST=0

HOSTNAME=`hostname`

ORAENV="oraenv"

TODAY=`date +%Y%m%d`

ORIGPATH=/usr/local/bin:$PATH

ORIGLD=$LD_LIBRARY_PATH

export PATH=$ORIGPATH

# Usage function.

f_usage(){

echo "Usage: `basename $0` -d DAYS [-a DAYS] [-b DAYS] [-c DAYS] [-n DAYS] [-r DAYS] [-u DAYS] [-t] [-h]"

echo " -d = Mandatory default number of days to keep log files that are not explicitly passed as parameters."

echo " -a = Optional number of days to keep audit logs."

echo " -b = Optional number of days to keep background dumps."

echo " -c = Optional number of days to keep core dumps."

echo " -n = Optional number of days to keep network log files."

echo " -r = Optional number of days to keep clusterware log files."

echo " -u = Optional number of days to keep user dumps."

echo " -h = Optional help mode."

echo " -t = Optional test mode. Does not delete any files."

}

if [ $# -lt 1 ]; then

f_usage

exit $FAILURE

fi

# Function used to check the validity of days.

f_checkdays(){

# Reject empty or non-numeric values first, then values below 1.

case "$1" in

''|*[!0-9]*)

echo "ERROR: Number of days is invalid."

exit $FAILURE

;;

esac

if [ "$1" -lt 1 ]; then

echo "ERROR: Number of days is invalid."

exit $FAILURE

fi

}

# Function used to cut log files.

f_cutlog(){

# Set name of log file.

LOG_FILE=$1

CUT_FILE=${LOG_FILE}.${TODAY}

FILESIZE=`ls -l $LOG_FILE | awk '{print $5}'`

# Cut the log file if it has not been cut today.

if [ -f $CUT_FILE ]; then

echo "Log Already Cut Today: $CUT_FILE"

elif [ ! -f $LOG_FILE ]; then

echo "Log File Does Not Exist: $LOG_FILE"

elif [ $FILESIZE -eq 0 ]; then

echo "Log File Has Zero Size: $LOG_FILE"

else

# Cut file.

echo "Cutting Log File: $LOG_FILE"

$MV $LOG_FILE $CUT_FILE

$TOUCH $LOG_FILE

fi

}

# Function used to delete log files.

f_deletelog(){

# Set name of log file.

CLEAN_LOG=$1

# Set time limit and confirm it is valid.

CLEAN_DAYS=$2

f_checkdays $CLEAN_DAYS

# Delete old log files if they exist.

find $CLEAN_LOG.[0-9][0-9][0-9][0-9][0-9][0-9][0-9][0-9] -type f -mtime +$CLEAN_DAYS -exec $RM {} \; 2>/dev/null

}

# Function used to get database parameter values.

f_getparameter(){

if [ -z "$1" ]; then

return

fi

PARAMETER=$1

sqlplus -s /nolog <<EOF | awk -F= "/^a=/ {print \$2}"

set head off pagesize 0 feedback off linesize 200

whenever sqlerror exit 1

conn / as sysdba

select 'a='||value from v\$parameter where name = '$PARAMETER';

EOF

}

# Function to get unique list of directories.

f_getuniq(){

if [ -z "$1" ]; then

return

fi

unset ARRAY

ARRCNT=0

for e in $1; do

# See if the element is already in the array.

MATCH=N

x=0

while [ $x -lt ${#ARRAY[*]} ]; do

if [ "$e" = "${ARRAY[$x]}" ]; then

MATCH=Y

fi

x=`expr $x + 1`

done

if [ "$MATCH" = "N" ]; then

ARRAY[$ARRCNT]=$e

ARRCNT=`expr $ARRCNT + 1`

fi

done

echo ${ARRAY[*]}

}

# Parse the command line options.

while getopts a:b:c:d:n:r:u:th OPT; do

case $OPT in

a) ADAYS=$OPTARG

;;

b) BDAYS=$OPTARG

;;

c) CDAYS=$OPTARG

;;

d) DDAYS=$OPTARG

;;

n) NDAYS=$OPTARG

;;

r) RDAYS=$OPTARG

;;

u) UDAYS=$OPTARG

;;

t) TEST=1

;;

h) f_usage

exit 0

;;

*) f_usage

exit 2

;;

esac

done

shift $(($OPTIND - 1))

# Ensure the default number of days is passed.

if [ -z "$DDAYS" ]; then

echo "ERROR: The default days parameter is mandatory."

f_usage

exit $FAILURE

fi

f_checkdays $DDAYS

echo "`basename $0` Started `date`."

# Use test mode if specified.

if [ $TEST -eq 1 ]

then

RM=$TESTRM

RMDIR=$TESTRMDIR

MV=$TESTMV

TOUCH=$TESTTOUCH

echo "Running in TEST mode."

fi

# Set the number of days to the default if not explicitly set.

ADAYS=${ADAYS:-$DDAYS}; echo "Keeping audit logs for $ADAYS days."; f_checkdays $ADAYS

BDAYS=${BDAYS:-$DDAYS}; echo "Keeping background logs for $BDAYS days."; f_checkdays $BDAYS

CDAYS=${CDAYS:-$DDAYS}; echo "Keeping core dumps for $CDAYS days."; f_checkdays $CDAYS

NDAYS=${NDAYS:-$DDAYS}; echo "Keeping network logs for $NDAYS days."; f_checkdays $NDAYS

RDAYS=${RDAYS:-$DDAYS}; echo "Keeping clusterware logs for $RDAYS days."; f_checkdays $RDAYS

UDAYS=${UDAYS:-$DDAYS}; echo "Keeping user logs for $UDAYS days."; f_checkdays $UDAYS

# Check for the oratab file.

if [ -f /var/opt/oracle/oratab ]; then

ORATAB=/var/opt/oracle/oratab

elif [ -f /etc/oratab ]; then

ORATAB=/etc/oratab

else

echo "ERROR: Could not find oratab file."

exit $FAILURE

fi

# Build list of distinct Oracle Home directories.

OH=`egrep -i ":Y|:N" $ORATAB | grep -v "^#" | grep -v "\*" | cut -d":" -f2 | sort | uniq`

# Exit if there are not Oracle Home directories.

if [ -z "$OH" ]; then

echo "No Oracle Home directories to clean."

exit $SUCCESS

fi

# Get the list of running databases.

SIDS=`ps -e -o args | grep pmon | grep -v grep | awk -F_ '{print $3}' | sort`

# Gather information for each running database.

for ORACLE_SID in `echo $SIDS`

do

# Set the Oracle environment.

ORAENV_ASK=NO

export ORACLE_SID

. $ORAENV

if [ $? -ne 0 ]; then

echo "Could not set Oracle environment for $ORACLE_SID."

else

export LD_LIBRARY_PATH=$ORACLE_HOME/lib:$ORIGLD

ORAENV_ASK=YES

echo "ORACLE_SID: $ORACLE_SID"

# Get the audit_file_dest (the parameter that holds the audit file directory).

ADUMPDEST=`f_getparameter audit_file_dest`

if [ ! -z "$ADUMPDEST" ] && [ -d "$ADUMPDEST" 2>/dev/null ]; then

echo " Audit Dump Dest: $ADUMPDEST"

ADUMPDIRS="$ADUMPDIRS $ADUMPDEST"

fi

# Get the background_dump_dest.

BDUMPDEST=`f_getparameter background_dump_dest`

echo " Background Dump Dest: $BDUMPDEST"

if [ ! -z "$BDUMPDEST" ] && [ -d "$BDUMPDEST" ]; then

BDUMPDIRS="$BDUMPDIRS $BDUMPDEST"

fi

# Get the core_dump_dest.

CDUMPDEST=`f_getparameter core_dump_dest`

echo " Core Dump Dest: $CDUMPDEST"

if [ ! -z "$CDUMPDEST" ] && [ -d "$CDUMPDEST" ]; then

CDUMPDIRS="$CDUMPDIRS $CDUMPDEST"

fi

# Get the user_dump_dest.

UDUMPDEST=`f_getparameter user_dump_dest`

echo " User Dump Dest: $UDUMPDEST"

if [ ! -z "$UDUMPDEST" ] && [ -d "$UDUMPDEST" ]; then

UDUMPDIRS="$UDUMPDIRS $UDUMPDEST"

fi

fi

done

# Do cleanup for each Oracle Home.

for ORAHOME in `f_getuniq "$OH"`

do

# Get the standard audit directory if present.

if [ -d $ORAHOME/rdbms/audit ]; then

ADUMPDIRS="$ADUMPDIRS $ORAHOME/rdbms/audit"

fi

# Get the Cluster Ready Services Daemon (crsd) log directory if present.

if [ -d $ORAHOME/log/$HOSTNAME/crsd ]; then

CRSLOGDIRS="$CRSLOGDIRS $ORAHOME/log/$HOSTNAME/crsd"

fi

# Get the Oracle Cluster Registry (OCR) log directory if present.

if [ -d $ORAHOME/log/$HOSTNAME/client ]; then

OCRLOGDIRS="$OCRLOGDIRS $ORAHOME/log/$HOSTNAME/client"

fi

# Get the Cluster Synchronization Services (CSS) log directory if present.

if [ -d $ORAHOME/log/$HOSTNAME/cssd ]; then

CSSLOGDIRS="$CSSLOGDIRS $ORAHOME/log/$HOSTNAME/cssd"

fi

# Get the Event Manager (EVM) log directory if present.

if [ -d $ORAHOME/log/$HOSTNAME/evmd ]; then

EVMLOGDIRS="$EVMLOGDIRS $ORAHOME/log/$HOSTNAME/evmd"

fi

# Get the RACG log directory if present.

if [ -d $ORAHOME/log/$HOSTNAME/racg ]; then

RACGLOGDIRS="$RACGLOGDIRS $ORAHOME/log/$HOSTNAME/racg"

fi

done

# Clean the audit_dump_dest directories.

if [ ! -z "$ADUMPDIRS" ]; then

for DIR in `f_getuniq "$ADUMPDIRS"`; do

if [ -d $DIR ]; then

echo "Cleaning Audit Dump Directory: $DIR"

find $DIR -type f -name "*.aud" -mtime +$ADAYS -exec $RM {} \; 2>/dev/null

fi

done

fi

# Clean the background_dump_dest directories.

if [ ! -z "$BDUMPDIRS" ]; then

for DIR in `f_getuniq "$BDUMPDIRS"`; do

if [ -d $DIR ]; then

echo "Cleaning Background Dump Destination Directory: $DIR"

# Clean up old trace files.

find $DIR -type f -name "*.tr[cm]" -mtime +$BDAYS -exec $RM {} \; 2>/dev/null

find $DIR -type d -name "cdmp*" -mtime +$BDAYS -exec $RMDIR {} \; 2>/dev/null

fi

if [ -d $DIR ]; then

# Cut the alert log and clean old ones.

for f in `find $DIR -type f -name "alert\_*.log" ! -name "alert_[0-9A-Z]*.[0-9]*.log" 2>/dev/null`; do

echo "Alert Log: $f"

f_cutlog $f

f_deletelog $f $BDAYS

done

fi

done

fi

# Clean the core_dump_dest directories.

if [ ! -z "$CDUMPDIRS" ]; then

for DIR in `f_getuniq "$CDUMPDIRS"`; do

if [ -d $DIR ]; then

echo "Cleaning Core Dump Destination: $DIR"

find $DIR -type d -name "core*" -mtime +$CDAYS -exec $RMDIR {} \; 2>/dev/null

fi

done

fi

# Clean the user_dump_dest directories.

if [ ! -z "$UDUMPDIRS" ]; then

for DIR in `f_getuniq "$UDUMPDIRS"`; do

if [ -d $DIR ]; then

echo "Cleaning User Dump Destination: $DIR"

find $DIR -type f -name "*.trc" -mtime +$UDAYS -exec $RM {} \; 2>/dev/null

fi

done

fi

# Cluster Ready Services Daemon (crsd) Log Files

for DIR in `f_getuniq "$CRSLOGDIRS $OCRLOGDIRS $CSSLOGDIRS $EVMLOGDIRS $RACGLOGDIRS"`; do

if [ -d $DIR ]; then

echo "Cleaning Clusterware Directory: $DIR"

find $DIR -type f -name "*.log" -mtime +$RDAYS -exec $RM {} \; 2>/dev/null

fi

done

# Clean Listener Log Files.

# Get the list of running listeners. It is assumed that if the listener is not running, the log file does not need to be cut.

ps -e -o args | grep tnslsnr | grep -v grep | while read LSNR; do

# Derive the lsnrctl path from the tnslsnr process path.

TNSLSNR=`echo $LSNR | awk '{print $1}'`

ORACLE_PATH=`dirname $TNSLSNR`

ORACLE_HOME=`dirname $ORACLE_PATH`

PATH=$ORACLE_PATH:$ORIGPATH

LD_LIBRARY_PATH=$ORACLE_HOME/lib:$ORIGLD

LSNRCTL=$ORACLE_PATH/lsnrctl

echo "Listener Control Command: $LSNRCTL"

# Derive the listener name from the running process.

LSNRNAME=`echo $LSNR | awk '{print $2}' | tr "[:upper:]" "[:lower:]"`

echo "Listener Name: $LSNRNAME"

# Get the listener version.

LSNRVER=`$LSNRCTL version | grep "LSNRCTL" | grep "Version" | awk '{print $5}' | awk -F. '{print $1}'`

echo "Listener Version: $LSNRVER"

# Get the TNS_ADMIN variable.

echo "Initial TNS_ADMIN: $TNS_ADMIN"

unset TNS_ADMIN

TNS_ADMIN=`$LSNRCTL status $LSNRNAME | grep "Listener Parameter File" | awk '{print $4}'`

if [ ! -z "$TNS_ADMIN" ]; then

export TNS_ADMIN=`dirname $TNS_ADMIN`

else

export TNS_ADMIN=$ORACLE_HOME/network/admin

fi

echo "Network Admin Directory: $TNS_ADMIN"

# If the listener is 11g, get the diagnostic dest, etc...

if [ $LSNRVER -ge 11 ]; then

# Get the listener log file directory.

LSNRDIAG=`$LSNRCTL<<EOF | grep log_directory | awk '{print $6}'

set current_listener $LSNRNAME

show log_directory

EOF`

echo "Listener Diagnostic Directory: $LSNRDIAG"

# Get the listener log file name (it lives in the trace directory).

LSNRLOG=`$LSNRCTL<<EOF | grep trc_directory | awk '{print $6"/"$1".log"}'

set current_listener $LSNRNAME

show trc_directory

EOF`

echo "Listener Log File: $LSNRLOG"

# If 10g or lower, do not use diagnostic dest.

else

# Get the listener log file location.

LSNRLOG=`$LSNRCTL status $LSNRNAME | grep "Listener Log File" | awk '{print $4}'`

fi

# See if the listener is logging.

if [ -z "$LSNRLOG" ]; then

echo "Listener Logging is OFF. Not rotating the listener log."

# See if the listener log exists.

elif [ ! -r "$LSNRLOG" ]; then

echo "Listener Log Does Not Exist: $LSNRLOG"

# See if the listener log has been cut today.

elif [ -f $LSNRLOG.$TODAY ]; then

echo "Listener Log Already Cut Today: $LSNRLOG.$TODAY"

# Cut the listener log if the previous two conditions were not met.

else

# Remove old 11g+ listener log XML files.

if [ ! -z "$LSNRDIAG" ] && [ -d "$LSNRDIAG" ]; then

echo "Cleaning Listener Diagnostic Dest: $LSNRDIAG"

find $LSNRDIAG -type f -name "log\_[0-9]*.xml" -mtime +$NDAYS -exec $RM {} \; 2>/dev/null

fi

# Disable logging.

$LSNRCTL <<EOF

set current_listener $LSNRNAME

set log_status off

EOF

# Cut the listener log file.

f_cutlog $LSNRLOG

# Enable logging.

$LSNRCTL <<EOF

set current_listener $LSNRNAME

set log_status on

EOF

# Delete old listener logs.

f_deletelog $LSNRLOG $NDAYS

fi

done

echo "`basename $0` Finished `date`."

exit

Set up a crontab job that runs this script every night at midnight and keeps log files for 31 days.

00 00 * * * /home/oracle/_cron/cls_oracle/cls_oracle.sh -d 31 > /home/oracle/_cron/cls_oracle/cls_oracle.sh.log 2>&1
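If the entry is installed from a script rather than with `crontab -e`, it helps to avoid adding it twice. The following is a minimal sketch; `merge_cron_entry` is a hypothetical helper that only filters text and does not touch the crontab itself.

```bash
#!/bin/bash
# Hypothetical helper: read an existing crontab on stdin and print it with
# the given entry appended, unless an identical line is already present.
merge_cron_entry() {
    local entry=$1 existing
    existing=$(cat)
    if printf '%s\n' "$existing" | grep -Fqx -- "$entry"; then
        printf '%s\n' "$existing"
    else
        [ -n "$existing" ] && printf '%s\n' "$existing"
        printf '%s\n' "$entry"
    fi
}
```

It could be used as `crontab -l 2>/dev/null | merge_cron_entry "$ENTRY" | crontab -`, with `$ENTRY` holding the line above. Running the script once by hand with `-t` (test mode, which per its usage text does not delete any files) before scheduling it shows what would be removed.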
